AI Research Scientist Manager · LLM Pre-training Data · Meta Superintelligence Labs

I lead the data research team at Meta Superintelligence Labs. We build data for the pre-training, mid-training, and post-training of frontier Muse models. Meet Muse Spark!

Previously, I built code LLMs at AWS AI Labs (Kiro) and studied at Stanford in the Stanford NLP Group (w/ Chris Potts), the University of Michigan (w/ David Jurgens and Kevyn Collins-Thompson), and Shanghai Jiao Tong University.

I value contributing to the research community. I run the Deep Learning for Code workshop series (ICLR’23, ICLR’25, NeurIPS’25, ICML’26) and serve as area chair, workshop co-organizer, and tutorial presenter at major venues.

Selected Publications

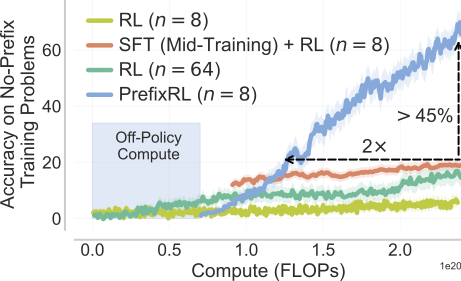

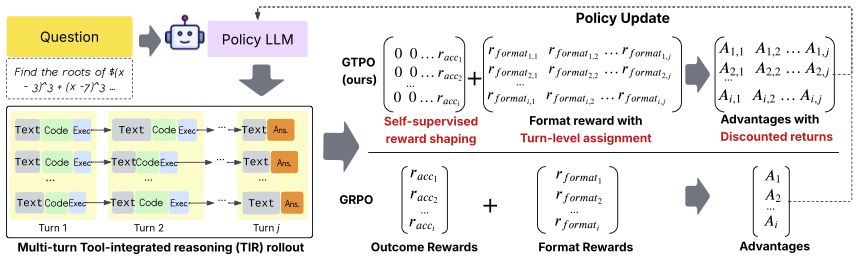

- Reuse your FLOPs: Scaling RL on Hard Problems by Conditioning on Very Off-Policy Prefixes. ICML, 2026.

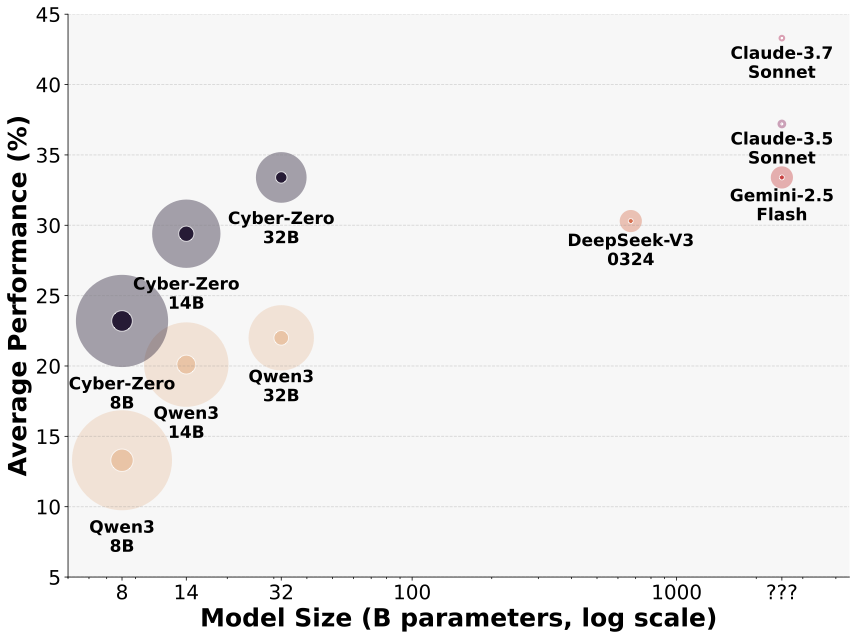

- Cyber-Zero: Training Cybersecurity Agents without Runtime. ICLR, 2026. Won first prize in the CSAW 2025 agentic automated CTF challenge.

Services

Organizer/Program Committee/Reviewer

- Lead organizer of the Deep Learning for Code (DL4C) workshop at ICLR'23, ICLR'25, NeurIPS'25, and ICML'26

- Co-organizer of the second LLM4Code workshop at ICSE'25

- Area Chair of ARR

- Outstanding Reviewer at ACL'21

- Current or past reviewer of NeurIPS, ICML, ICLR, ARR/*ACL, COLM, ICWSM, WebSci, AAAI, and many workshops

Teaching Assistant

- CS 224U Natural Language Understanding, Stanford University

- Applied Machine Learning (Coursera MOOC by the University of Michigan), University of Michigan (founding and head TA, 300k+ enrollment)

- Introduction to Programming and Academic Writing, Shanghai Jiao Tong University