I am currently a Tech Lead Manager/Senior Applied Scientist at AWS AI Labs on the Amazon Q Developer team. We work on large language models (LLMs) for code. Please email me about opportunities on our team. Previously, I was at Stanford, where I was part of the Stanford NLP Group, advised by Prof. Chris Potts. Before that, I was at the University of Michigan, working with Prof. David Jurgens and Prof. Kevyn Collins-Thompson. Earlier, I studied at Shanghai Jiao Tong University. Email: zijwang@cs.stanford.edu | Google Scholar | Follow @zijianwang30


*=equal contribution; †=author is an intern

Hantian Ding, Zijian Wang, Giovanni Paolini, Varun Kumar, Anoop Deoras, Dan Roth, and Stefano Soatto ICML, 2024 paper / summary / 机器之心 (Synced; in Chinese)

Yuhao Zhang†, Shiqi Wang, Haifeng Qian, Zijian Wang, Mingyue Shang, Linbo Liu, Sanjay Krishna Gouda, Baishakhi Ray, Murali Krishna Ramanathan, Xiaofei Ma, and Anoop Deoras arXiv, 2024 paper

Ben Athiwaratkun*, Shiqi Wang*, Mingyue Shang*, Yuchen Tian, Zijian Wang, Sujan Kumar Gonugondla, Sanjay Krishna Gouda, Rob Kwiatkowski, Ramesh Nallapati, and Bing Xiang Findings of ACL, 2024 paper

|

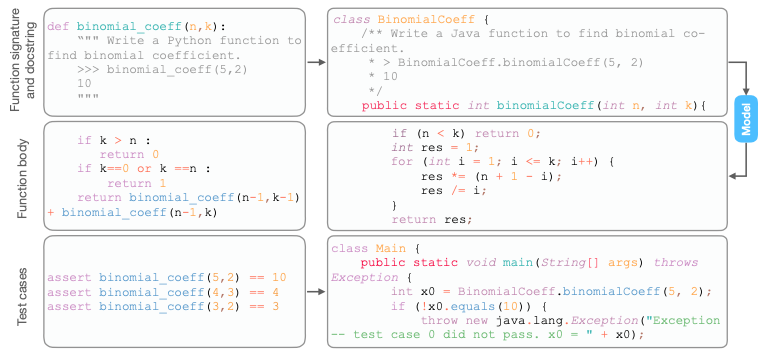

Yangruibo Ding*†, Zijian Wang*, Wasi Ahmad*, Murali Krishna Ramanathan, Ramesh Nallapati, Parminder Bhatia, Dan Roth, and Bing Xiang LREC-COLING, 2024 paper |

|

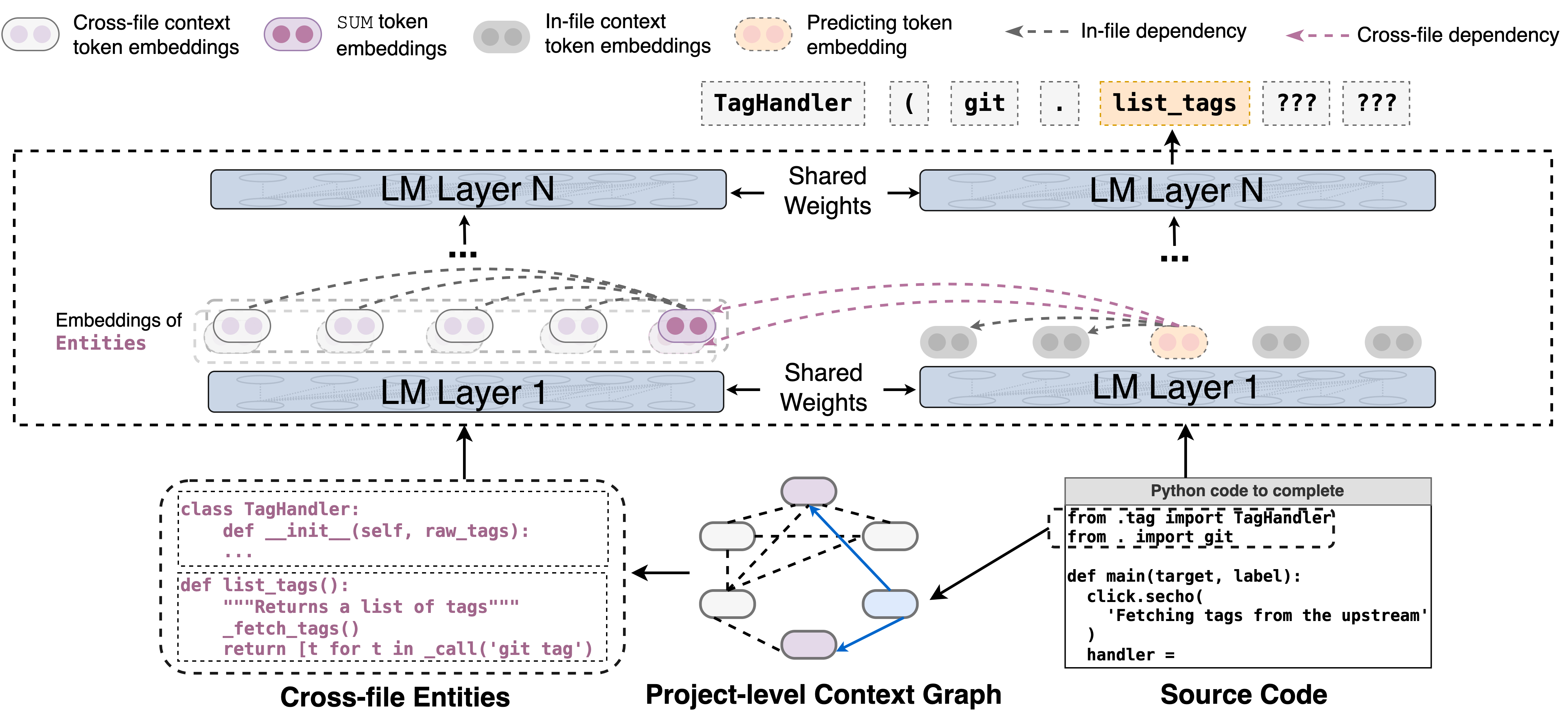

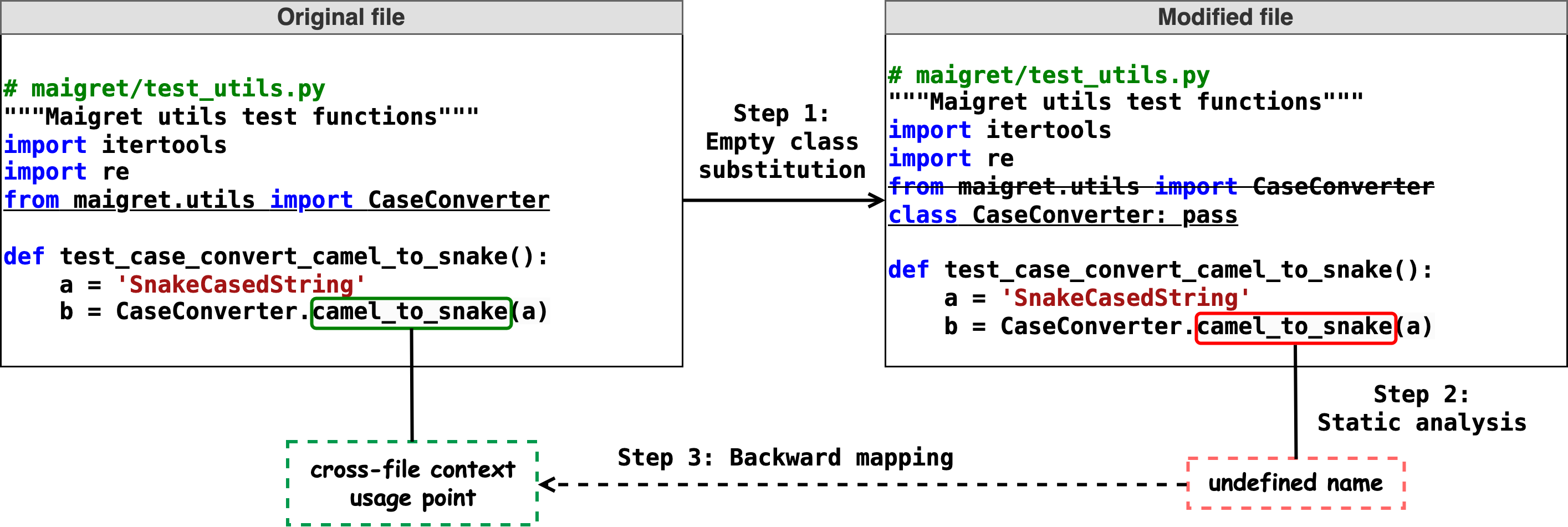

Yangruibo Ding*†, Zijian Wang*, Wasi Ahmad*, Hantian Ding, Ming Tan, Nihal Jain, Murali Krishna Ramanathan, Ramesh Nallapati, Parminder Bhatia, Dan Roth, and Bing Xiang NeurIPS (Datasets and Benchmarks Track), 2023 paper/webpage/data/code |

|

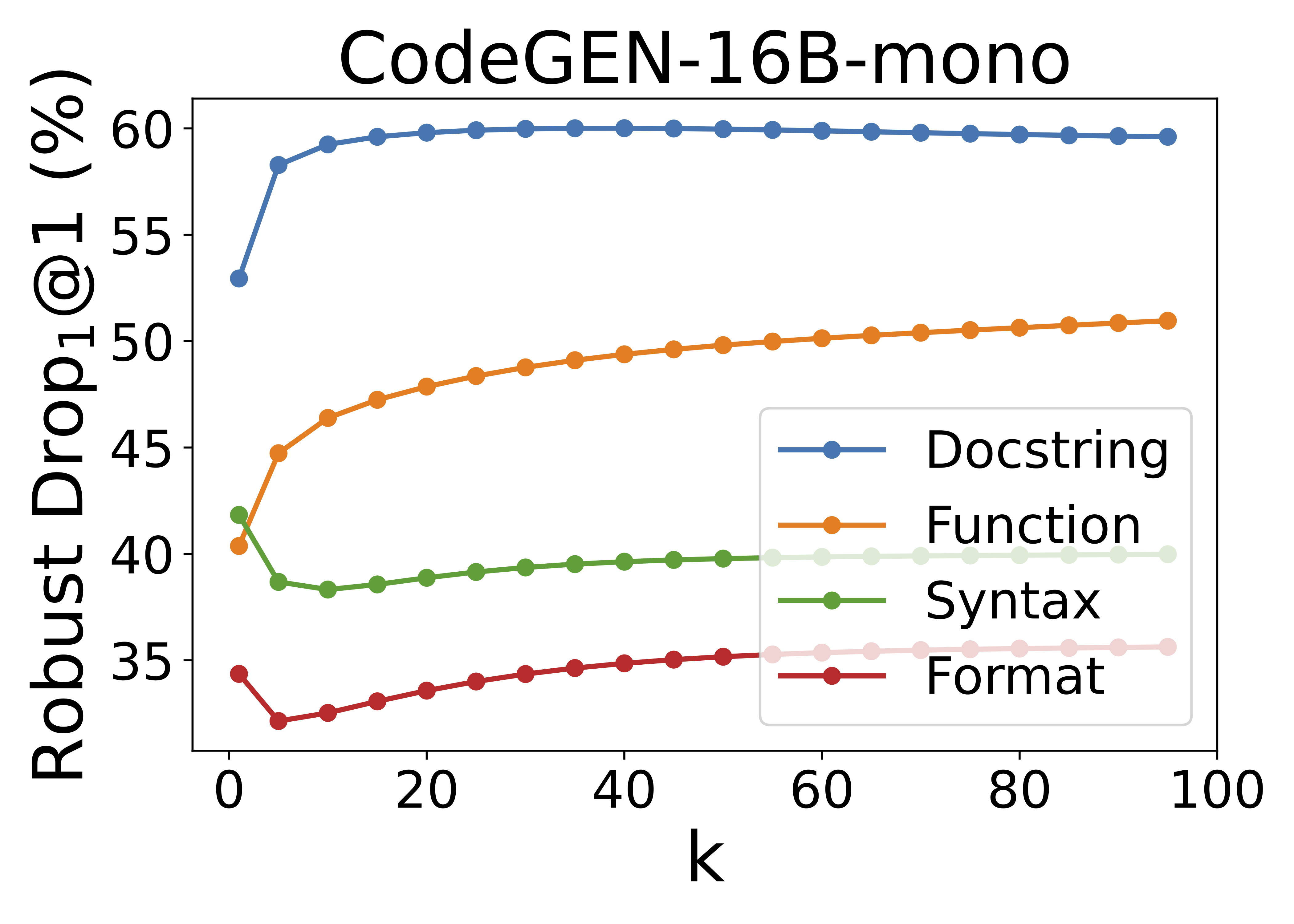

Shiqi Wang*, Zheng Li*†, Haifeng Qian, Chenghao Yang, Zijian Wang, Mingyue Shang, Varun Kumar, Samson Tan, Baishakhi Ray, Parminder Bhatia, Ramesh Nallapati, Murali Krishna Ramanathan, Dan Roth, and Bing Xiang ACL, 2023 paper / code + data |

|

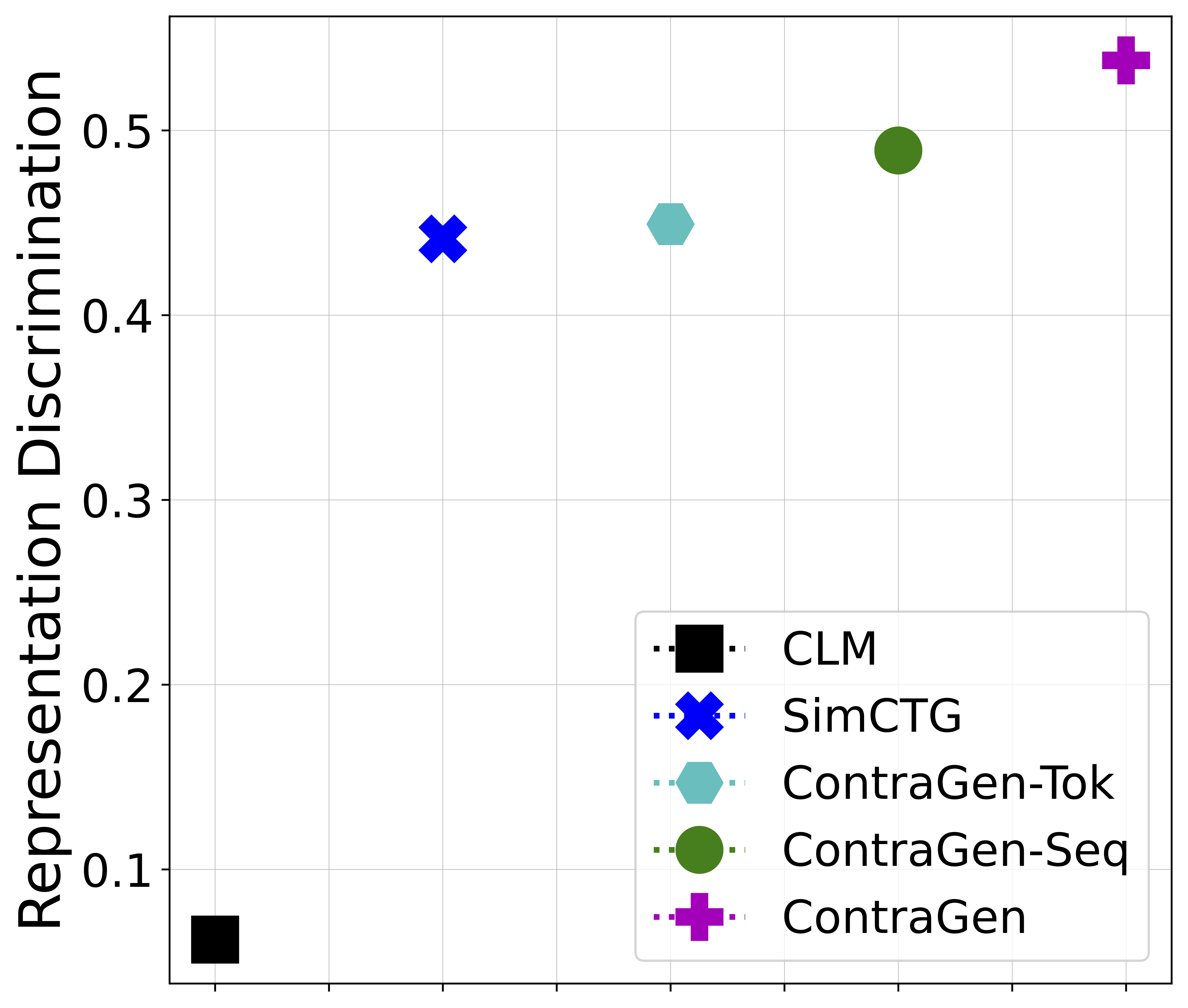

Nihal Jain*†, Dejiao Zhang*, Wasi Ahmad*, Zijian Wang, Feng Nan, Xiaopeng Li, Ming Tan, Ramesh Nallapati, Baishakhi Ray, Parminder Bhatia, Xiaofei Ma, and Bing Xiang ACL, 2023 paper |

Hantian Ding, Varun Kumar, Yuchen Tian, Zijian Wang, Rob Kwiatkowski, Xiaopeng Li, Murali Krishna Ramanathan, Baishakhi Ray, Parminder Bhatia, Sudipta Sengupta, Dan Roth, and Bing Xiang ACL (Industry), 2023 paper

Xiaokai Wei, Sujan Gonugondla, Wasi Ahmad, Shiqi Wang, Baishakhi Ray, Haifeng Qian, Xiaopeng Li, Varun Kumar, Zijian Wang, Yuchen Tian, Qing Sun, Ben Athiwaratkun, Mingyue Shang, Murali Krishna Ramanathan, Parminder Bhatia, and Bing Xiang ESEC/FSE, 2023 paper

Ben Athiwaratkun, Sanjay Krishna Gouda, Zijian Wang, ...19 other authors..., Sudipta Sengupta, Dan Roth, and Bing Xiang ICLR, 2023 paper / code + data

|

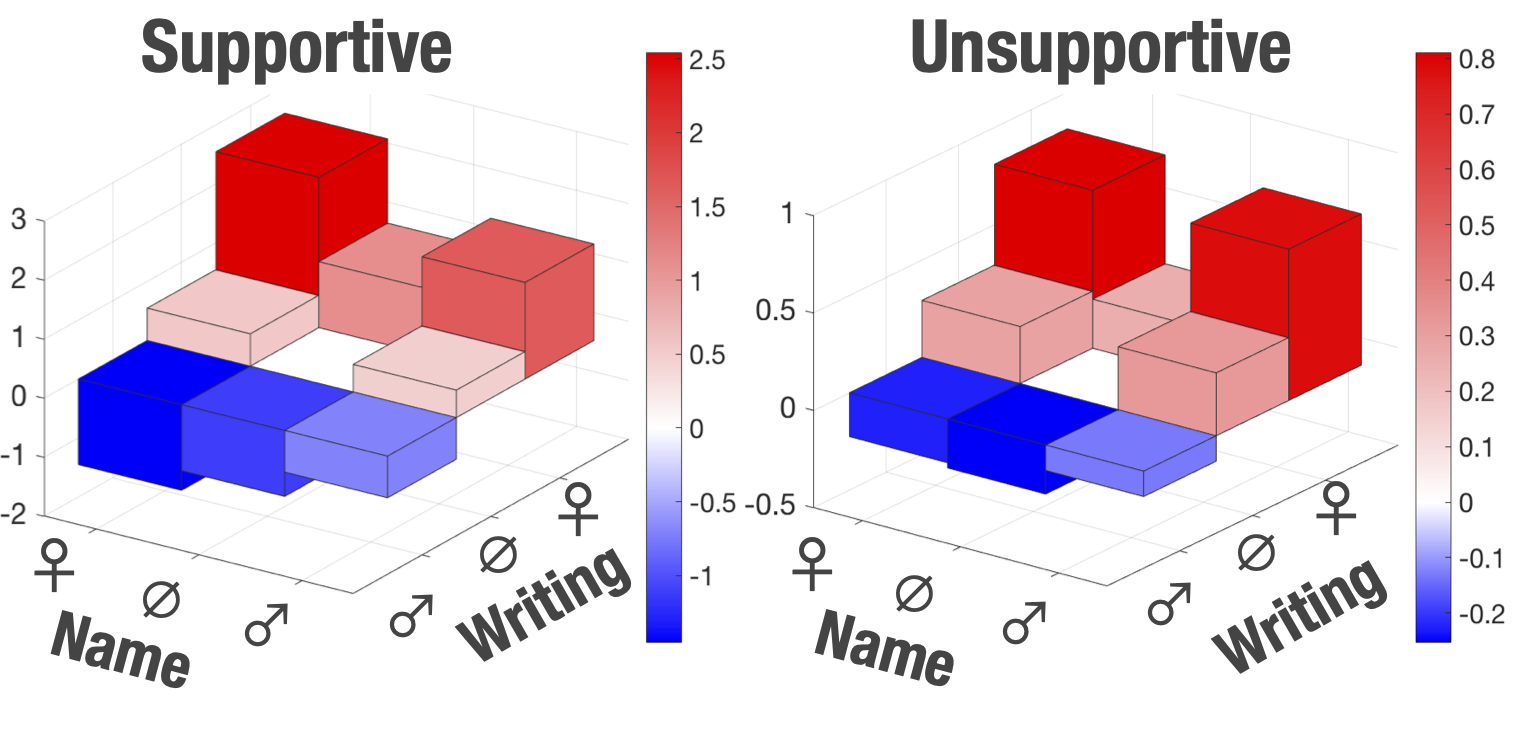

Yuantong Li†, Xiaokai Wei*, Zijian Wang*, Shen Wang*, Xiaofei Ma, Parminder Bhatia, and Andrew Arnold 4th Workshop on Gender Bias in Natural Language Processing at NAACL, 2022 paper |

|

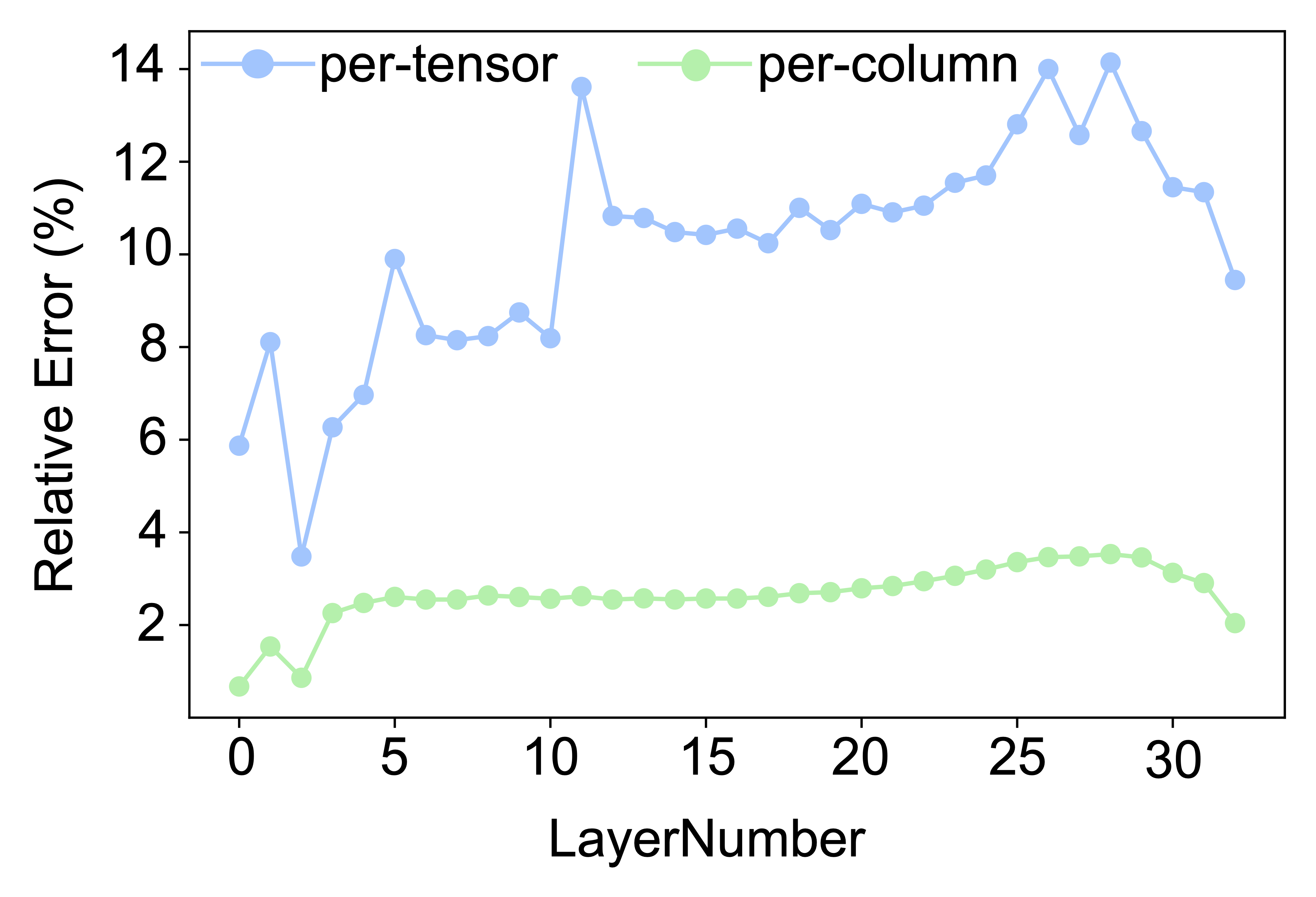

Simone Bombari†, Alessandro Achille, Zijian Wang, Yu-Xiang Wang, Yusheng Xie, Kunwar Yashraj Singh, Srikar Appalaraju, Vijay Mahadevan, and Stefano Soatto arXiv, 2022 paper |

Zheng Li*†, Zijian Wang*, Ming Tan, Ramesh Nallapati, Parminder Bhatia, Andrew Arnold, Bing Xiang, and Dan Roth ACL, 2022 paper / code

|

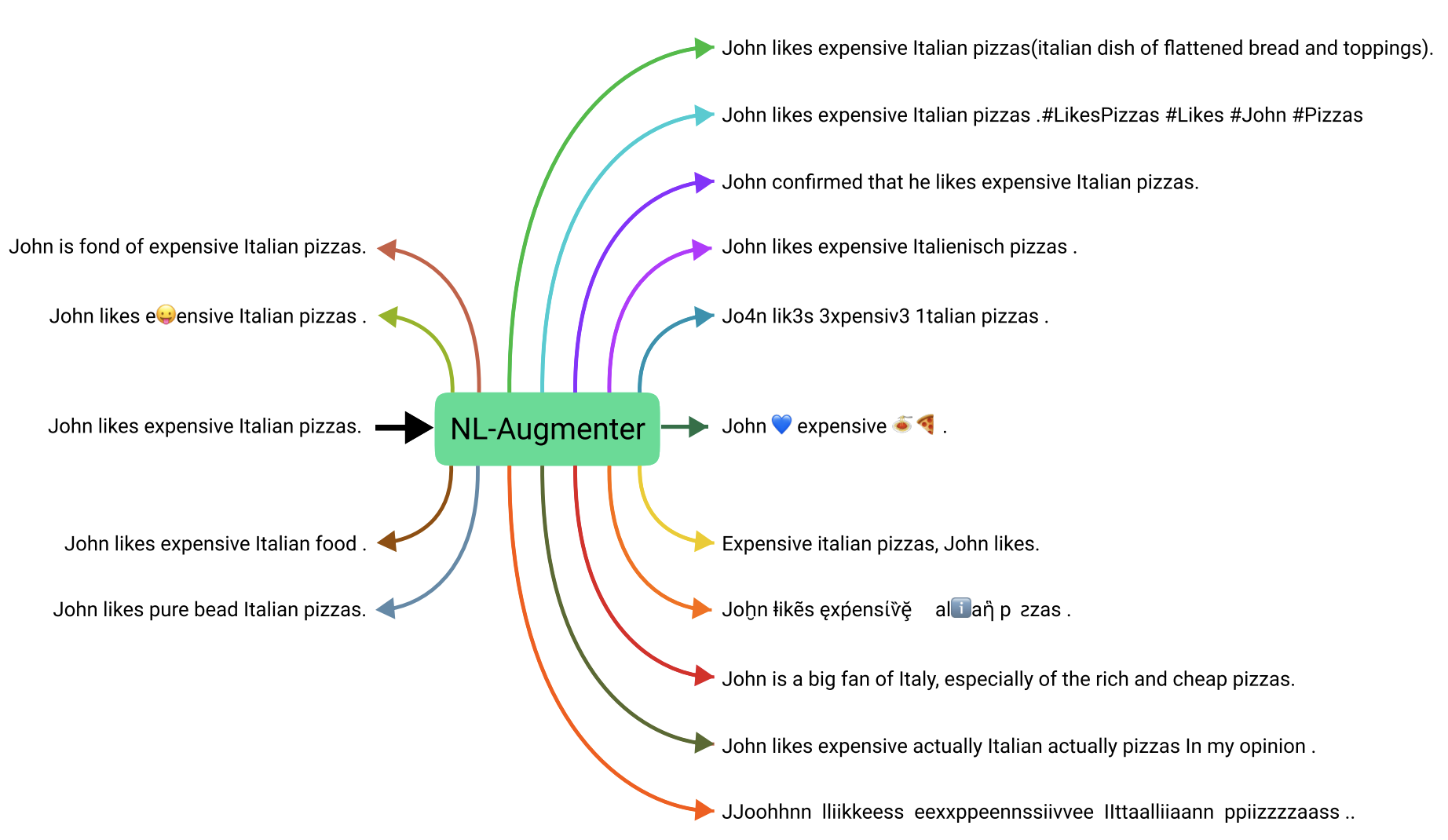

The NL-Augmenter Team arXiv, 2021 paper / code + data |

|

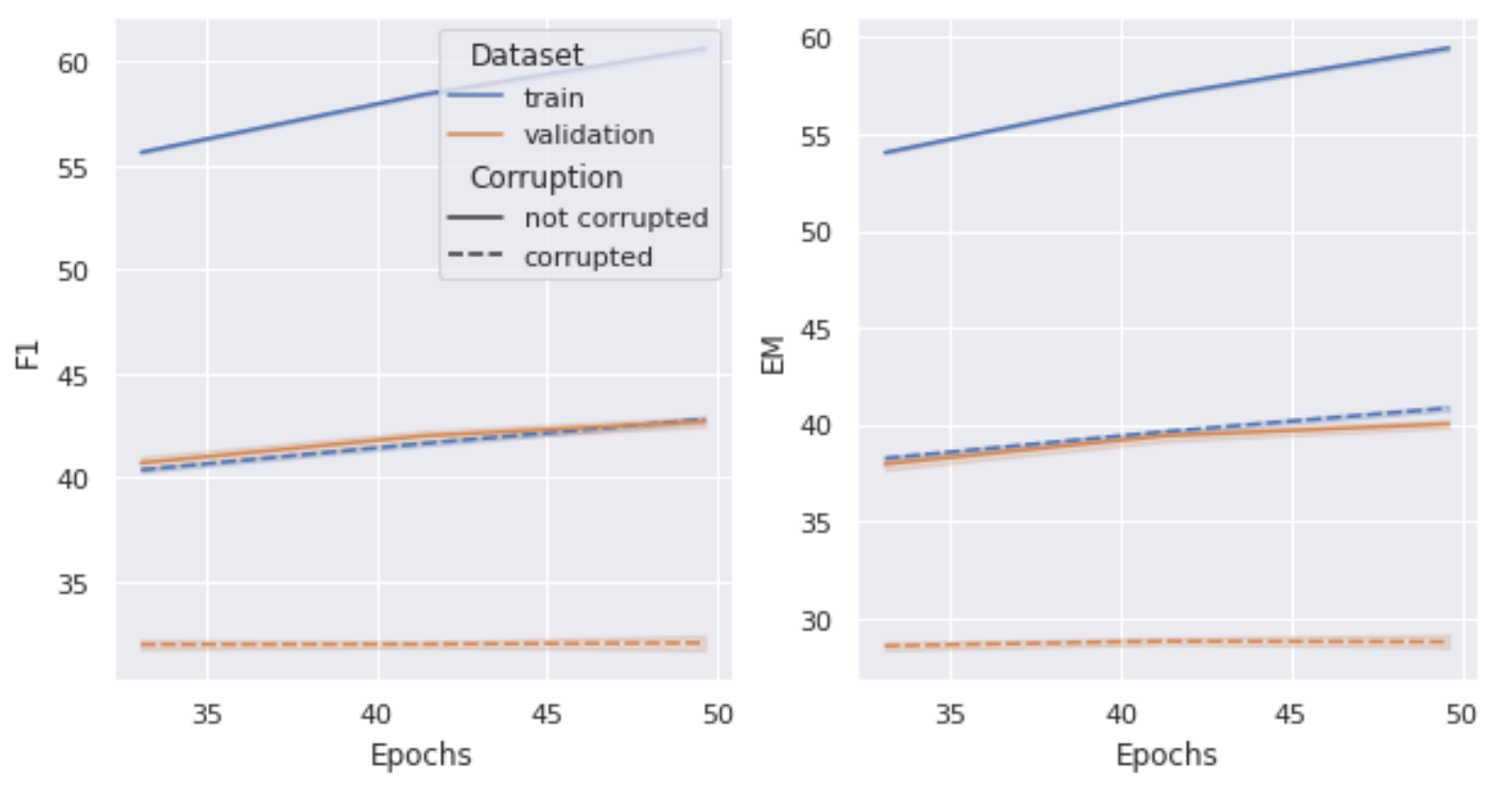

Elisa Kreiss*, Zijian Wang*, and Christopher Potts CoNLL, 2020 paper / code + data / video |

|

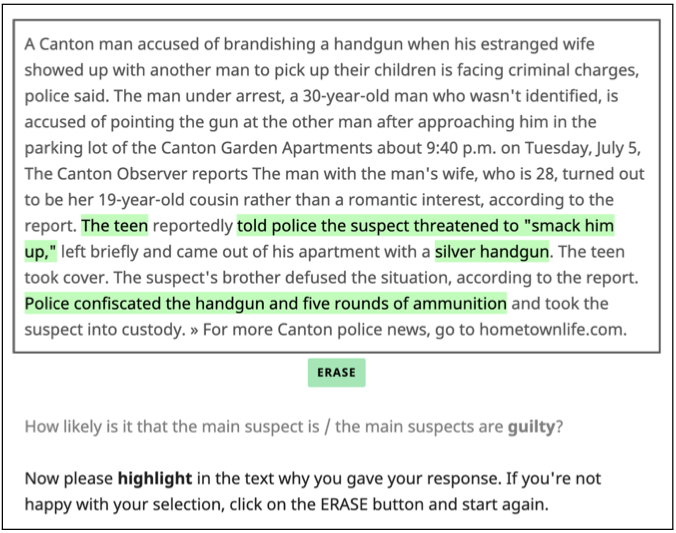

Zijian Wang and Christopher Potts EMNLP-IJCNLP, 2019 paper / code + data |

Peng Qi, Xianwen Lin*, Leo Mehr*, Zijian Wang*, and Christopher D. Manning EMNLP-IJCNLP, 2019 paper / code / blog post

|

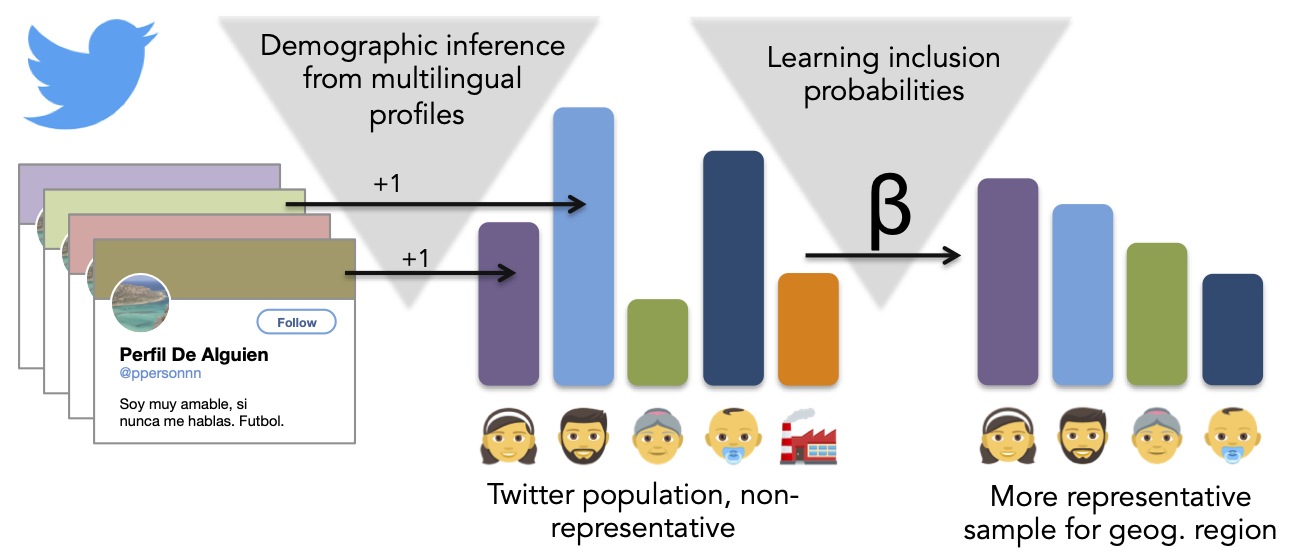

Zijian Wang, Scott A. Hale, David Adelani, Przemyslaw A. Grabowicz, Timo Hartmann, Fabian Flöck, and David Jurgens TheWebConf (WWW), 2019 (also presented at IC2S2 2019) paper / demo / code / pip-installable package / poster |

|

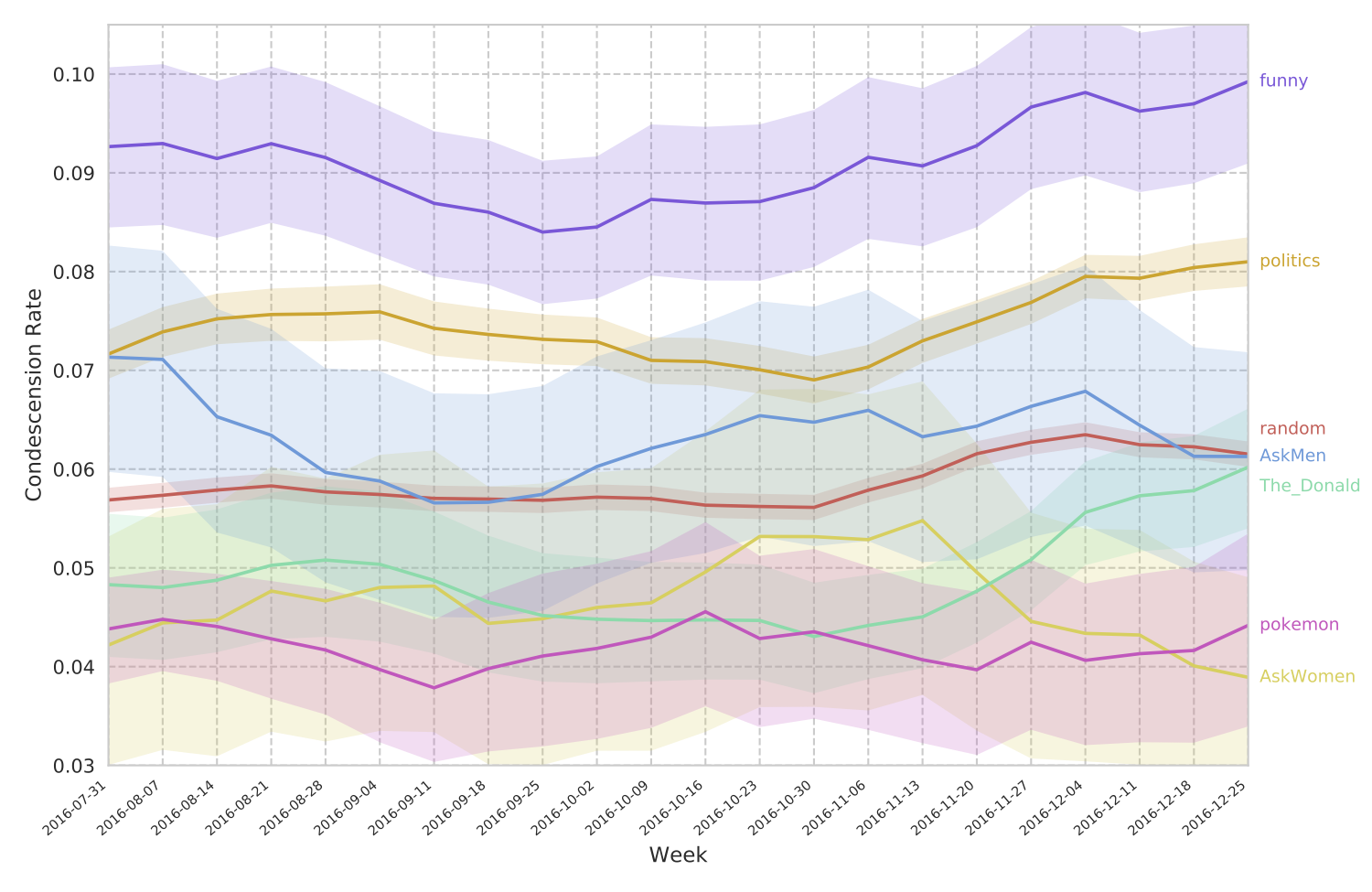

Zijian Wang and David Jurgens EMNLP, 2018 paper / project webpage / pip-installable package |

Heeryung Choi, Zijian Wang, Christopher Brooks, Kevyn Collins-Thompson, Beth Glover Reed, and Dale Fitch EDM, 2017 paper
